High-performance computing centers have always operated at the frontier of computational architecture. From vector systems to petascale and exascale platforms, HPC leaders understand that infrastructure decisions shape scientific competitiveness for years.

Quantum computing is now entering that same strategic layer. In our recent webinar, speakers focused on what quantum means specifically for HPC centers.

The central question is straightforward:

How should HPC centers approach quantum so that it strengthens long-term capability?

HPC centers exist to:

For HPC leaders, the key decision is how to embed quantum into the broader computational strategy in a way that builds institutional depth rather than temporary exposure.

Rented cloud access lets you run experiments, but owned infrastructure builds institutional capability and IP.

There is a fundamental difference between renting quantum compute time and embedding quantum into your infrastructure. Renting gives you results. Integration gives you control, continuity, and compounding expertise.

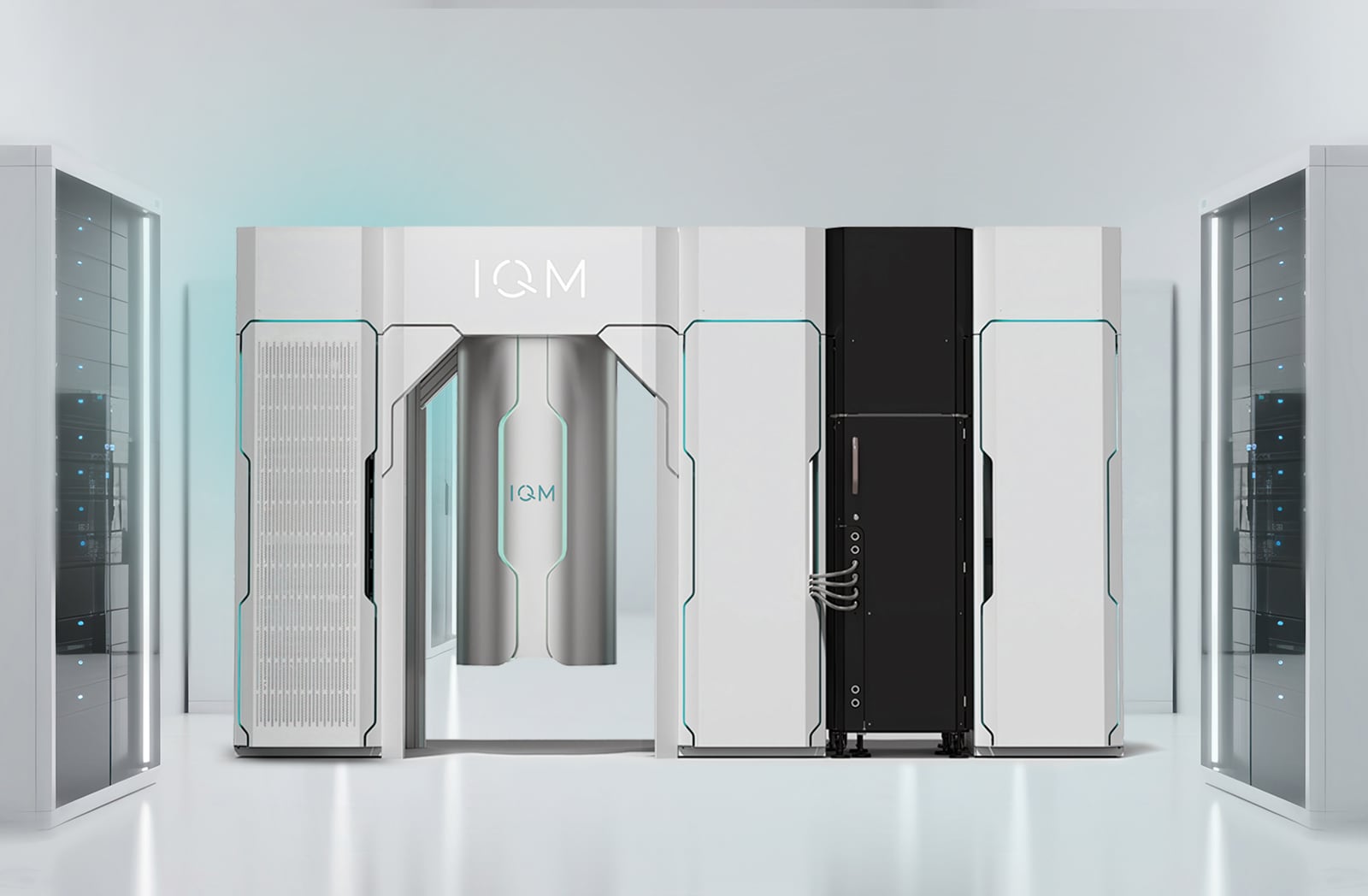

When a quantum system is deployed on-premises and integrated into an HPC environment, it becomes part of the architecture. Instead of API-level interaction, your researchers develop hands-on operational knowledge. Instead of adapting to a vendor’s roadmap, your institution shapes its own. Instead of limited access, you build infrastructure-level reliability, uptime management, and long-term research continuity.

Access expires, but capability compounds.

HPC centers that integrate quantum into their environment do more than test workloads. They build internal expertise, retain intellectual property, and create the foundation for future scaling toward fault tolerance.

When quantum systems are deployed within HPC environments, several strategic advantages emerge:

HPC centers act as national or regional anchors for computational research. The institutions that deploy and integrate quantum systems early become focal points for talent, partnerships, and applied research programs.

LRZ provides a clear example of this dynamic.

By integrating an on-premises quantum system within its HPC environment, LRZ moved beyond simply offering access for experimentation. It created a platform around which researchers, system architects, and application scientists can collaborate on hybrid workflows. That integration work strengthens internal expertise in scheduling, orchestration, and system optimization. Over time, that operational knowledge compounds.

An integrated quantum system allows LRZ to:

This is how ecosystems form. Infrastructure attracts researchers. Researchers develop methods. Methods generate IP and funding. Programs expand. The center becomes a regional capability hub.

LRZ's return to IQM as a repeat customer reinforces this trajectory. Institutions do not upgrade quantum systems for symbolic reasons. They upgrade when the capability built on the initial deployment justifies scaling. That scaling decision reflects accumulated expertise, validated workflows, and institutional commitment.

HPC centers play a central role in cultivating scientific communities.

When quantum systems are embedded within the center:

Platforms like IQM Resonance extend this learning pathway, offering cloud-based experimentation alongside institution-grade deployments. Cloud experimentation can serve as a gateway; on-prem integration strengthens long-term expertise.

Over time, expertise shifts from theoretical knowledge to operational fluency.

For HPC centers, the practical value of quantum lies in hybrid workflows:

These workflows require:

Open quantum systems designed for integration support these needs.

As hybrid research expands, operational familiarity becomes critical. Teams need insight into hardware behavior, error profiles, and scaling constraints. This understanding develops through sustained interaction with deployed quantum systems.

HPC leaders are accustomed to evaluating architectures across performance, power, cooling, scalability, and lifecycle evolution.

Quantum systems must be evaluated through a similar lens:

Systems designed with full-stack openness and HPC-native integration offer greater flexibility as research evolves.

Early architecture decisions influence future upgrade pathways.

HPC centers operate under rigorous performance and uptime expectations.

Quantum deployments must meet similar standards:

Operational continuity builds trust with researchers, which in turn encourages adoption.

Quantum adoption requires a staged infrastructure strategy, one that develops institutional capability over time and aligns with existing HPC investment cycles. Based on the discussion in the “How to Buy a Quantum Computer” webinar, HPC leaders should consider a phased strategy:

Phase 1 – Exploration

The exploration phase focuses on building internal understanding and evaluating technical relevance. HPC centers use cloud-based access to prototype algorithms, test hybrid workflows, and train developers on real hardware. Internal teams identify candidate workloads and begin measuring classical–quantum coordination overhead. This phase establishes a factual basis for future infrastructure decisions.
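One way to make "coordination overhead" concrete during exploration is to record, for each hybrid job, how wall-clock time splits between classical work, waiting, data movement, and actual QPU execution. The sketch below assumes a hypothetical five-stage breakdown and treats queue wait plus transfer time as coordination overhead; the stage names and that definition are illustrative choices, not a standard metric or any vendor's API.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class HybridJobTiming:
    """Wall-clock seconds spent in each stage of one hybrid job.

    The five-stage breakdown is a hypothetical example; adapt it to whatever
    your scheduler and quantum software stack can actually timestamp.
    """
    classical_prep_s: float  # circuit construction and compilation
    queue_wait_s: float      # waiting for the quantum resource
    transfer_s: float        # moving circuits and results between systems
    qpu_exec_s: float        # time the quantum hardware spends executing
    postprocess_s: float     # classical analysis of measurement results

    def total_s(self) -> float:
        return (self.classical_prep_s + self.queue_wait_s + self.transfer_s
                + self.qpu_exec_s + self.postprocess_s)

    def overhead_s(self) -> float:
        # Assumption: "coordination overhead" = time spent neither computing
        # classically nor executing on the QPU.
        return self.queue_wait_s + self.transfer_s


def overhead_fraction(jobs: Iterable[HybridJobTiming]) -> float:
    """Share of total wall time lost to coordination across a batch of jobs."""
    job_list = list(jobs)
    total = sum(j.total_s() for j in job_list)
    if total == 0:
        raise ValueError("no recorded time")
    return sum(j.overhead_s() for j in job_list) / total


if __name__ == "__main__":
    # Illustrative numbers only, not measurements from any real system.
    batch = [
        HybridJobTiming(2.0, 5.0, 1.0, 1.5, 0.5),
        HybridJobTiming(1.0, 1.0, 0.5, 2.0, 0.5),
    ]
    print(f"coordination overhead: {overhead_fraction(batch):.0%}")
```

Tracking this fraction across the exploration phase would show whether queueing and data movement, rather than quantum execution itself, dominate a candidate workload, which is useful evidence for the later on-premises decision.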

Phase 2 – Deployment

The deployment phase integrates quantum into the HPC environment. This includes installing an on-premises system, preparing facility infrastructure, and integrating the quantum resource into existing schedulers and workflows. Operations teams develop hybrid job orchestration processes and establish system management procedures. Quantum becomes an operational component within the broader computing architecture.
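Hybrid job orchestration has to support tight loops in which a classical optimizer repeatedly submits quantum evaluations. A minimal sketch, assuming a variational workflow with parameter-shift gradients (chosen here only as a representative hybrid pattern): `StubQPU.evaluate_on_qpu` is a hypothetical stand-in that returns the exact expectation value ⟨Z⟩ = cos θ of a single qubit prepared by RY(θ), where a real deployment would submit a circuit through the center's scheduler and estimate the value from measurement shots.

```python
import math
from dataclasses import dataclass


@dataclass
class StubQPU:
    """Hypothetical stand-in for a scheduled quantum job.

    Returns the exact <Z> expectation of RY(theta)|0>, which is cos(theta).
    A real backend would compile a circuit, queue it through the HPC
    scheduler, and estimate the value from measurement shots.
    """
    jobs_submitted: int = 0

    def evaluate_on_qpu(self, theta: float) -> float:
        self.jobs_submitted += 1  # count every quantum job the loop generates
        return math.cos(theta)


def parameter_shift_gradient(qpu: StubQPU, theta: float) -> float:
    # Parameter-shift rule for a Pauli-rotation gate: two QPU jobs per gradient.
    plus = qpu.evaluate_on_qpu(theta + math.pi / 2)
    minus = qpu.evaluate_on_qpu(theta - math.pi / 2)
    return (plus - minus) / 2


def hybrid_minimize(qpu: StubQPU, theta: float, lr: float = 0.4,
                    steps: int = 100) -> tuple[float, float]:
    """Classical gradient descent driving quantum evaluations in a loop."""
    for _ in range(steps):
        theta -= lr * parameter_shift_gradient(qpu, theta)  # classical update
    return theta, qpu.evaluate_on_qpu(theta)


if __name__ == "__main__":
    qpu = StubQPU()
    theta, energy = hybrid_minimize(qpu, theta=0.1)
    print(f"theta={theta:.4f}, <Z>={energy:.4f}, QPU jobs={qpu.jobs_submitted}")
```

Each optimizer step costs two quantum evaluations, so 100 steps plus a final evaluation generate 201 jobs. On shared infrastructure, any per-job queue and transfer latency is multiplied by that count, which is why scheduler integration matters for hybrid workloads.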

Phase 3 – Capability Expansion

In the capability expansion phase, the center concentrates on performance optimization and technical depth. Teams conduct advanced error mitigation work, refine compiler and transpilation strategies, and tune system behavior for specific workloads. Collaboration with academic and industrial partners increases, and hybrid workflow efficiency improves through iterative optimization. Institutional expertise grows through hands-on system interaction.
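Error mitigation is one place where this hands-on work becomes concrete. As an illustrative technique (picked as an example, not necessarily what any particular center uses), zero-noise extrapolation runs the same circuit at deliberately amplified noise levels and extrapolates the measured expectation values back to the zero-noise limit. The sketch below assumes the noisy values have already been measured and fits a straight line by ordinary least squares; the scale factors and values in the example are synthetic.

```python
from typing import Sequence


def zero_noise_extrapolate(scales: Sequence[float],
                           values: Sequence[float]) -> float:
    """Linear zero-noise extrapolation.

    Fits values ~ a + b * scale by ordinary least squares and returns a,
    the estimate at scale 0 (no noise). Assumes the expectation value
    varies roughly linearly with the noise scale factor.
    """
    if len(scales) != len(values) or len(scales) < 2:
        raise ValueError("need at least two (scale, value) pairs")
    n = len(scales)
    mean_x = sum(scales) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in scales)
    if sxx == 0:
        raise ValueError("scale factors must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(scales, values))
    slope = sxy / sxx
    return mean_y - slope * mean_x  # intercept = value at zero noise


if __name__ == "__main__":
    # Synthetic data: true value 0.8, degrading by 0.1 per unit of noise scale.
    scales = [1.0, 2.0, 3.0]
    noisy = [0.7, 0.6, 0.5]
    print(f"mitigated estimate: {zero_noise_extrapolate(scales, noisy):.3f}")
```

A linear model is the simplest choice; the extrapolation model and the way noise is amplified are themselves tuning decisions that depend on the hardware's error profile and the workload.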

Phase 4 – Scalable Evolution

The scalable evolution phase prepares the organization for larger systems and architectural progression toward fault tolerance. Infrastructure planning accounts for future system upgrades, increased qubit counts, and tighter classical–quantum control loops. Research roadmaps align with quantum error correction development and long-term hybrid architecture requirements. Quantum is embedded within the center’s strategic computing roadmap.

HPC centers have consistently shaped the trajectory of computational science. Quantum represents the next architectural frontier. The question now is how quickly hybrid computing environments will mature.

Centers that integrate quantum thoughtfully, invest in operational expertise, and align deployments with long-term research strategy will define the next generation of computational infrastructure.

We have outlined a phased approach for HPC leaders considering quantum adoption, from exploration to scalable evolution, grounded in the idea that capability-building is the real strategic asset.

Because in the end, quantum computing will not be defined by who accessed it first. It will be defined by who built it into their foundation.

Emilia Stuart is a content strategist and storyteller at IQM Quantum Computers, specializing in translating complex quantum computing concepts into engaging narratives. With a background in research and tech marketing, she understands potential customers and crafts stories that resonate. Emilia’s passion is making intricate technologies accessible to diverse audiences.
